Case Study: Digital Mental Health Study

- Genesis

- 7 days ago

Updated: 5 days ago

My role: UX Researcher / UX Research Operations Analyst

Timeline: 1 year

Introduction

The UCLA Depression Grand Challenge (DGC) is a campus initiative to transform the understanding of depression and develop effective treatments. Its research efforts focus on discovering the causes and biology of depression in order to develop targeted treatments. The DGC conducts various studies, including the National Digital Mental Health Study (NDMH).

NDMH is a digital sensing study, conducted in collaboration with Apple, that collects objective health measures, including physical and mental health activity, sleep, and iOS device usage and interaction, and examines these factors in relation to symptoms of depression and anxiety.

Study Overview

Recruit 600 participants for 6 months of data collection, including:

Daily Apple Watch wear

Completing tasks with Apple Health and Research applications

Responding to questionnaires

Study Timeline and Tasks

Participants were assigned daily, biweekly, and periodic (every 8 weeks) tasks to complete on their devices.

To give a better idea of the volume of data collected, here is a visualization of the data that was compiled over a 6-month period.

User Profile

As the name of the study implies, 600 participants were recruited nationally.

Recruitment was unusual in that there was no targeted user profile. Instead, one of the goals was to collect data from participants with diverse backgrounds.

Eligibility factors included:

Current symptoms of depression

Age

Sex

Race

Ethnicity

Height and weight

State of residence

Notice

Because the study content is highly confidential, I am unable to showcase specific details of my work. However, I'd be happy to walk through my research approach and thought process.

All information stated above is publicly available here.

My Role

Due to the high volume of participants enrolled and data collected, I had a nontraditional UX Research role in this study: a hybrid role where I combined the responsibilities of a UX Researcher and a UX Research Operations Analyst. I was responsible for instructing participants on how to perform the study tasks and for ensuring that they followed through with those tasks until study completion.

Below is a brief summary of my responsibilities:

Onboard

Introduce study goals and tasks

Ensure participants understand how to perform and access study tasks

Troubleshoot

Diagnose technical issues

Provide recommendations for devices

Contact

Contact participants to address compliance

Ensure adherence to study tasks

Apply habit-formation principles

My Research Goals

With my responsibilities laid out, my goals were simple:

Goal 1

Ensure high compliance with study tasks, and maintain and improve participant engagement.

Goal 2

Ensure high-quality, consistent data collection throughout the study.

Methods

To carry out my responsibilities and meet my goals, I used 3 main methods:

1) Onboarding sessions (with usability-style assessments)

2) Troubleshooting (monitoring for missing data and diagnosing technical issues)

3) Contacting (check-in phone calls)

For each method, I will review how I executed it, the challenges I encountered, how I overcame them, and the outcomes.

Method 1: Onboard Assessment with Usability-style Testing

Responsibilities

Led a 1.5-hour video conference to train participants on study tasks

Guided participants on how to access tasks on devices, when to complete tasks, and how to enable device settings

Observed participants perform tasks, determined user pain points, and provided support for seamless usability

Challenges | Approaches |

Outcomes

Improved user confidence and adoption, ensuring independent use beyond initial session

Onboarded participants with varying levels of technical experience and digital literacy, enabling them to participate effectively

Reduced friction and cognitive load, lowering early participant drop-off

Refined intake instructions based on recurring participant friction points

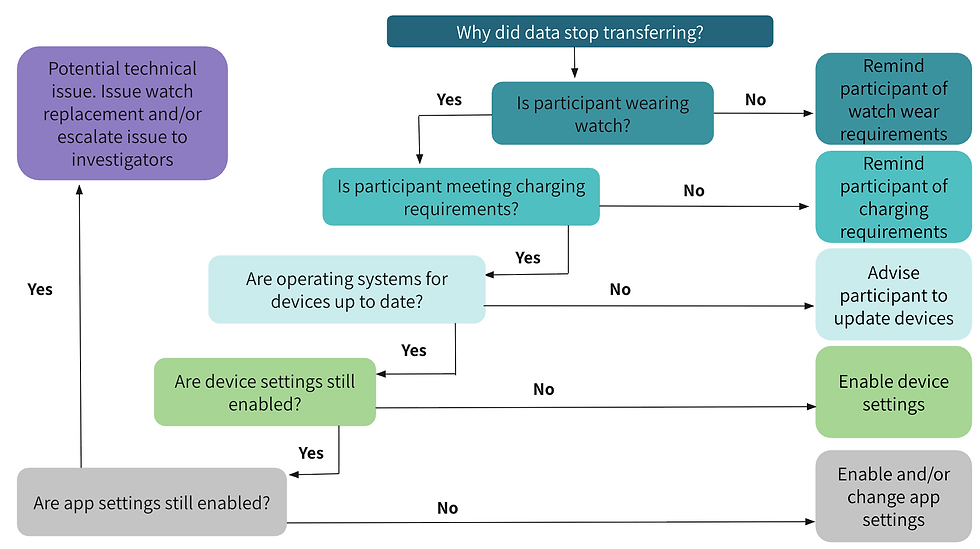

Method 2: Troubleshooting

Responsibilities

Reviewed multiple high-volume data streams daily to detect non-compliant participants

Ensured participants continued to complete study tasks to collect as much data as possible

Emailed and/or called participants to remind them of tasks or to determine whether they were experiencing technical issues
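
Since the study's actual tooling is confidential, here is only a rough, hypothetical sketch of what a daily compliance check like the one described above could look like. The participant IDs, the 3-day threshold, and the function names are all assumptions made for illustration, not details from the study.

```python
from datetime import date

# Hypothetical threshold: flag a participant once this many days have
# passed since their last data upload. The study's real criteria are
# not public.
MAX_MISSING_DAYS = 3


def days_since_last_upload(last_upload: date, today: date) -> int:
    """Number of whole days between the last upload and today."""
    return (today - last_upload).days


def flag_for_follow_up(last_uploads, today, max_missing_days=MAX_MISSING_DAYS):
    """Return IDs of participants whose latest upload is too old.

    last_uploads maps a participant ID to the date of their most recent
    upload. The result is sorted with the longest gap first, so the
    participants most at risk of dropping off are contacted first.
    """
    overdue = {}
    for participant_id, last_upload in last_uploads.items():
        gap = days_since_last_upload(last_upload, today)
        if gap >= max_missing_days:
            overdue[participant_id] = gap
    # Longest gap first; ties are broken by participant ID.
    return sorted(overdue, key=lambda pid: (-overdue[pid], pid))
```

Given made-up upload dates of January 9, 5, and 7 for three participants and a review date of January 10, this would flag the second and third participants for an email or call, with the second one listed first.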

Challenges | Approaches |

Outcomes

Provided timely technical support that prevented participant drop-off and contributed to sustained long-term engagement

Reduced technical barriers proactively, ensuring consistent data capture and minimizing gaps in data collection

Streamlined troubleshooting approaches based on recurring technical issues, enabling faster resolution times across participants

Method 3: Contacting

Responsibilities

Brief phone call every 8 weeks to ask participants about their experience in the study

Opportunity to answer questions, discuss study progress, and collect feedback

Challenges | Approaches |

Outcomes

Created a sense of accountability and support through personalized communication, reinforcing participants' commitment to the study

Mitigated missing or delayed data collection by implementing targeted strategies and reminders

Surfaced recurring usability challenges to investigators, informing product and research developments to keep users engaged

Sustained high compliance by combining behavioral insights with structured follow-up strategies to support participant adherence

Study Summary

Overall, the study had satisfactory retention rates and positive participant feedback! Below is a summary of what I learned from the study and what I would do differently in future projects.

What I learned

What I would do differently